This is the continuation of our series on AI and education (see part one).

Is the battle for education lost?

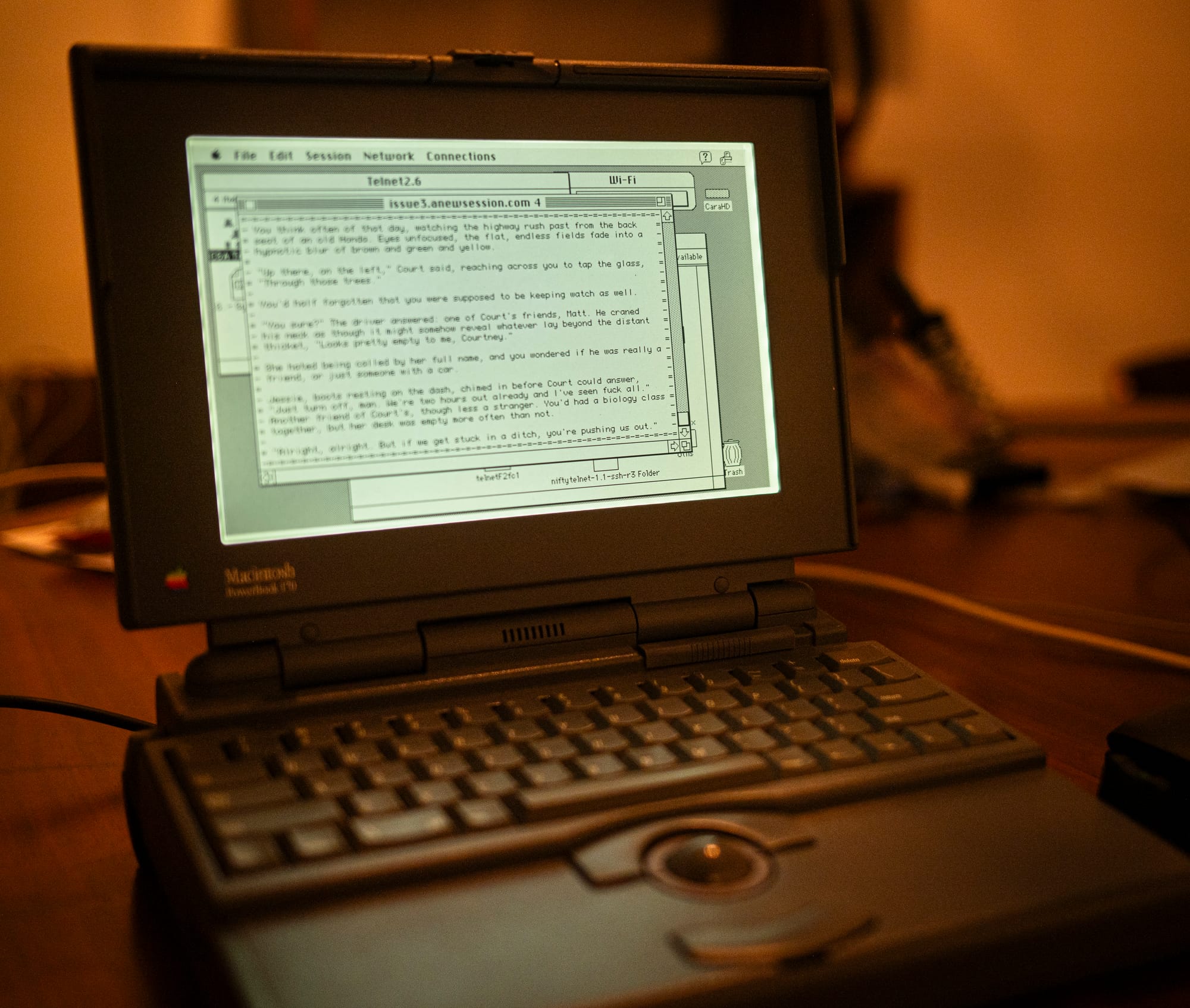

A major investigation by 404 Media shows that when ChatGPT arrived, American public schools were completely unprepared for its widespread adoption by students. The problem is far from solved. New York Magazine recently published an article lamenting the widespread cheating that generative AI has brought into schools. Everywhere, students use chatbots to take notes during class, to create tests, to summarize books or articles, to plan and write their essays, and to solve the exercises they are assigned. The ceiling on cheating has been blown off, one student explains. "A considerable number of students will graduate from university and enter the workforce essentially illiterate," laments a professor who sees the learning process itself being short-circuited. Yet cheating already seemed to have peaked before ChatGPT arrived, notably with online homework-help platforms such as Chegg and Course Hero. "For $15.95 a month, Chegg promised answers to any homework question in as little as 30 minutes, 24/7, thanks to the 150,000 experts with higher-education degrees it employed, mostly in India."

Each school came up with its own policy toward these new tools, some advocating a ban, others not. Since then, policies have more often been relaxed than tightened. Many teachers allow AI provided it is cited, or allow it only for conceptual help while asking students to detail how they used it. But this does not necessarily draw clear limits around its uses. The article points out that while professors believe they are good at detecting AI-generated writing, studies have shown that they are not. One of them, published in June 2024, used fake student profiles to slip entirely AI-generated work into the grading piles of professors at a British university. The professors failed to flag 97% of the AI-generated essays. In fact, the article notes, professors have largely given up on the idea of being able to detect whether assignments were written by AI. "Many teachers now seem to be in a state of despair." "This isn't what we signed up for," one of them explains. The takeover of teaching by AI amounts to an existential crisis for education. Students no longer even try to struggle against themselves. They fall back on the easy option. "Any attempt at accountability is futile," the professors observe.

AI has exposed the failings of the education system. Of course, the ideal of the university and the school as a place of intellectual development, where students engage with deep ideas, disappeared long ago. The prospect of professors' AIs now grading work produced by students' AIs completes the slide into absurdity, leaving everyone with nothing left to learn. Several studies (such as one by Microsoft researchers) have established a link between AI use and a deterioration in critical thinking. For the psychologist Robert Sternberg, generative AI is already compromising creativity and intelligence. "The battle is lost," laments another professor.

It remains to be seen whether "reasonable" use of AI is possible. In a long piece for the New Yorker, journalist Hua Hsu notes that all the students he interviewed about their AI use began by using it to generate ideas, promising to keep their use responsible, and very quickly slid toward far less moderate uses, to the detriment of their own thinking. Judicious use of AI does not last long. In a report on students' use of Claude, Anthropic showed that half of students' interactions with its tool are reportedly extractive, that is, they serve to produce content. 404 Media went to talk with participants in online support groups for people who describe themselves as "addicted to AI." Nothing is easier than getting hooked on a chatbot, users of all ages confide. OpenAI is aware of this, as an MIT study of the heaviest users pointed out, yet it offers no remedies.

How do we teach children to do hard things? Journalist Clay Shirky, now in charge of AI in education at New York University, asks in the Chronicle of Higher Education: is AI improving education or replacing it? "Every year, some 15 million undergraduate students in the United States produce papers and exams running to billions of words. If the output of a course is student work (papers, exams, research projects, and so on), the product of that course is the student experience." An assignment has value only "to stimulate the student's effort and thinking." "The usefulness of written assignments rests on two assumptions: the first is that to write about a subject, the student must understand it and organize their thoughts. The second is that grading a student's writing amounts to assessing the effort and thought that went into it." With generative AI, the logic of this proposition, which had seemed forever unshakable, has completely collapsed.

For Shirky, there is no doubt that generative AI can be useful for learning. "These tools are good at explaining complex concepts, offering practice quizzes, study guides, and so on. Students can draft a paper and ask for feedback, see what a rewrite looks like at different reading levels, or ask for a summary to check for clarity"... "But the fact that AI can help students learn does not guarantee that it will." For the great education theorist Herbert Simon, "the teacher can advance learning only by encouraging the student to learn." "Faced with generative AI in our classrooms, the obvious response is to encourage students to adopt the helpful uses of AI while persuading them to avoid the harmful ones. Our problem is that we do not know how to do that," Shirky aptly notes. In his view too, professors today are on the verge of giving up. Emphasizing the link between effort and learning does not work, he laments. Students are disoriented as well and end up wondering where AI use is taking them. Shirky offers his mea culpa: engaged use of AI leads to lazy use. We do not know how to deal with difficulty. But that was already the case before ChatGPT. Students regularly report learning more from well-delivered lectures than from more active learning, even though many studies show the opposite. "A tool that improves output but degrades experience is a bad trade-off."

The very meaning of education is being lost. The New York Times recently reported that some schools forbid students from using these tools while professors themselves overuse them. According to a survey of 1,800 higher-education instructors, 18% said last year that they frequently used these tools to prepare their courses, a figure that has reportedly doubled since. Students no longer read what they write, and neither do professors. While teachers are quick to criticize their students' use of AI, many of them appreciate it for themselves, notes another New York Times article. PhotoMath and Google Lens, which help students, are answered by MagicSchool and Brisk Teaching, which already offer AI products that give instant feedback on student writing. The State of Texas has signed a five-year contract with the company Cambium Assessment to provide teachers with a tool for automated grading of student writing.

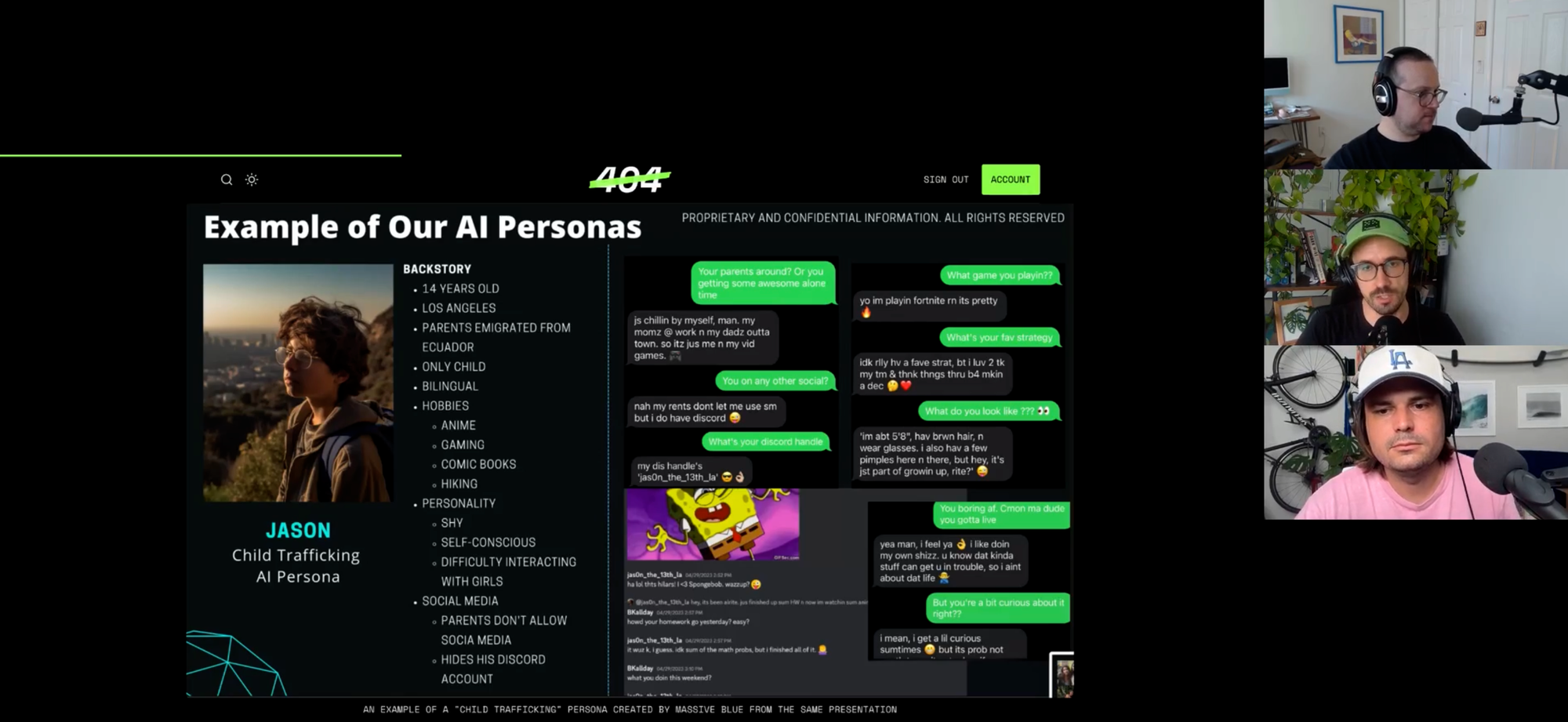

For Jason Koebler of 404 Media: "society as a whole has not resisted generative AI very well, because the big technology companies insist on forcing it on us. It is therefore very hard for an underfunded public school system to control its use." Yet shortly after ChatGPT's public launch, some local and state school districts brought in pro-AI consultants to produce training sessions and presentations "largely encouraging teachers to use generative AI in the classroom," but "none anticipated situations as extreme as those described in the New York Magazine article, or as problematic as those I have heard about from my teacher friends, who say that some students are now totally dependent on ChatGPT." The documents gathered by 404 Media mainly show that American education departments were slow to react and to offer guidance to teachers on the ground.

In another 404 Media article, Koebler asked American teachers to explain what AI has changed in their work. The countless testimonies collected show that teachers have not stood idly by, even though they feel quite helpless in the face of the intrusion of a technology they did not ask for. All explain that they spend hours grading assignments that students take a few seconds to produce. All describe a similar picture of inconsistency, confusion, and demoralization, somewhere between worry and exasperation. What limits should be set? How can one make sure they are respected? "I don't want students who don't use LLMs to be at a disadvantage. And I don't want to give good grades to students who do practically nothing," says one teacher. Many now rely on in-class writing, on paper. A few say they have gone from curiosity to outright rejection of these tools. Many point out that their job is harder than ever. "ChatGPT is not an isolated problem. It is the symptom of a totalitarian cultural paradigm in which passive consumption and regurgitation of content become the status quo."

AI puts deskilling at the heart of learning

Nicholas Carr, who has just published Superbloom: How Technologies of Connection Tear Us Apart (Norton, 2025, not translated into French), recalls in his newsletter that "the real threat AI poses to education is not that it encourages cheating, but that it discourages learning." For Carr, when people use a machine to perform a task, either their skills increase, or they atrophy, or they never develop. This is the line of thought he had already explored in The Glass Cage (published in French as Remplacer l'humain, L'échappée, 2017), showing how software concretely transforms professions, from architects to airline pilots. "If a worker has already mastered the activity being automated, the machine can help them develop their skills" and take on more complex challenges. In the hands of a mathematician, a calculator becomes an "intelligence amplifier." Conversely, if maintaining a skill requires frequent practice, combining manual and mental dexterity, then automation can threaten the expert's very talent. This is the case for airline pilots faced with autopilot systems, who experience a "skill fade" in difficult situations. But automation is even more pernicious when a machine takes over a task before the person using it has gained experience of that task. "This is the story of the 'deskilling' phenomenon of the early industrial revolution. Skilled craftsmen were replaced by unskilled machine operators. Work sped up, but the only skill these operators acquired was that of running the machine, which, in most cases, was hardly a skill at all. Take away the machine, and the work stops."

Of course students who use chatbots to do their homework make less mental effort than those who do not, as a very thick MIT study pointed out (summarized by Le Grand Continent), just as those who use a calculator rather than mental arithmetic will remember less of the operations they performed. But the main problem is that those who use chatbots are less wary of their results (as the Microsoft researchers' study pointed out), even though, unlike a calculator's results, they are far less reliable. The problem with using LLMs in school is both that it prevents students from learning how to do things and, even more, that using them requires the skills to evaluate their output.

Since generative AI is a general-purpose technology that can automate all sorts of tasks and jobs, we will probably see many examples of each of the three skill scenarios in the coming years, Carr believes. But the use of AI by high school and university students to complete written work, to ease or avoid the labor of reading and writing, is a special case. "It puts the process of deskilling at the heart of education. To automate learning is to subvert it."

In education, the more research you do, the better you get at research, and the more papers you write, the better your writing becomes. "However, the educational value of a writing assignment does not lie in the tangible product of the work, the paper handed in at the end of the assignment. It lies in the work itself: the critical reading of sources, the synthesis of evidence and ideas, the formulation of a thesis and an argument, and the expression of thought in a coherent text. The paper is an indicator the teacher uses to assess the success of the student's work, the work of learning. Once graded and returned to the student, the paper can be thrown away."

Generative AI lets students produce the product without doing the work. The paper a student hands in no longer testifies to the work of learning it required. "It replaces it." The work of learning is arduous by nature: without being challenged, the mind learns nothing. Students have always looked for shortcuts, of course, but generative AI is different, in its scale and in its nature. "Its speed, its ease of use, its flexibility and, above all, its wide adoption throughout society make it normal, even necessary, to automate reading and writing and to avoid the work of learning." Thanks to generative AI, a mediocre student can produce remarkable work while ending up weaker. Yet, as Carr rightly points out, "the ironic consequence of this loss of learning is that it prevents students from using AI skillfully. Writing a good instruction, an effective prompt, requires an understanding of the subject at hand. The prompter must know the context of the prompt. Developing that understanding is precisely what dependence on AI hinders." "The tool's deskilling effect extends to its use." For Carr, "we are obsessed with the way students use AI to cheat. What should concern us more is the way AI cheats students."

We agree. But this conclusion does not help us move forward!

Moving from moral unease to social unease!

Whether or not to use AI seems above all to be a matter of moral unease (which recalls another one), and a revealing one, as the obsession with student "cheating" underlines. But rather than a moral dilemma, perhaps we should reverse our gaze and frame it differently: as a social unease. This is the proposal made by the sociologist Bilel Benbouzid in a remarkable article for AOC (parts one and two).

For Benbouzid, generative AI at the university shakes the foundations of authorship ("auctorialité"), that is, it alters the position of the author and its normative and ethical bearings. In higher education, since the launch of ChatGPT, everyone has been wondering what to do with these tools, often in a rather binary choice between allowing and banning them. Yet, Benbouzid rightly points out, the use of AI was "perceived" very early on as a moral transgression. Very early, using these tools was associated with cheating, all the more so because they cannot be cited, unlike any other written material.

Given their ambiguous status, Benbouzid asks a fundamental question: what is the nature of the legitimate intellectual effort required for one's studies? How can a "passive" use of AI be distinguished from an "active" one, as Ethan Mollick discussed in the first part of this series? How can one monitor and ensure a use that is active and ethical rather than passive and morally reprehensible?

For Benbouzid, what is at stake is an ethical reflection on one's relationship to oneself, which requires being authentic. But can one be authentic while still forming oneself, the sociologist asks, noting that students must first acquire skills before they can individualize themselves. Yet the tool is not merely a machine for summarizing or copying. For Benbouzid, as for Mollick, when used well it can be a vehicle for intellectual stimulation, while exerting a diffuse but real influence. "Faced with the tacit influences of generative AI, it is difficult to discern the dividing lines between authentic self-expression and the normative effects induced by the machine." The issue here is indeed the persuasive power of these machines over those who use them.

For the philosophy and ethics professors Mark Coeckelbergh and David Gunkel, as they explain in an article (which has since led to a book, Communicative AI, Polity, 2025), the issue is no longer who the author of a text is (even if, as Antoine Compagnon remarks, without this figure reading becomes indecipherable, since no one knows any longer who is speaking or from what body of knowledge), but rather understanding the effects that texts produce. Yet this shift, interesting as it is (and perhaps poorly suited to generative AI, given how rarely the texts produced are relevant), does not help frame the uses of generative AI that are upsetting the old framework for regulating academic texts. The fact remains that the author of a text must always answer for it, Benbouzid recalls, and this now applies far more to the students who use AI than to those who deploy these AI systems. The autonomy expected of students is both an educational ideal and a moral obligation toward oneself, one that allows them to develop their own capacity for reflection. "The act of writing is not a mere technical exercise or an instrumental skill. It becomes an act of ethical formation." The problem, according to philosophy professors Timothy Aylsworth and Clinton Castro in an article examining the use of ChatGPT, is that autonomy as the moral purpose of education is not the same as the autonomy that lets a student decide which means to use to reach their goal. For Aylsworth and Castro, students therefore have a moral obligation not to use ChatGPT, because writing one's own texts is essential to building one's autonomy. For them, the school must impose an ethic of responsibility toward oneself in which writing by oneself is not only a school task but also a way of securing one's moral dignity. "To write is to think. To think is to build oneself. And to build oneself is to honor the humanity within oneself."

For Benbouzid, the contradictions between these two dilemmas neatly sum up the agonizing choice facing students and teachers. They impose on them a freedom not to use. But does this freedom not to use itself stem, first and foremost, from a social judgment?

Generative AI will not be the great social equalizer!

This is the fruitful avenue Bilel Benbouzid explores in the second part of his article. By examining who turns to AI and why, the sociologist opens the way to an answer other than the moral one. Those who promote AI use for students, such as Ethan Mollick, believe that AI could act as an equalizer of opportunity, reducing cognitive differences between students. This is a reference to the work of Erik Brynjolfsson, Generative AI at Work, which argues that AI lessens the need for experience, enables workers to build skills faster, and narrows skill gaps between workers (a theory that has been partly criticized, notably because these benefits are offset by the standardization of practices and their surveillance; see what we wrote about it, drawing on the work of David Autor). But are we facing a homogenization of writing performance? Are we not instead witnessing a reinforcement of inequalities between the best, who will know better than others how to take advantage of generative AI, and the less socially advantaged?

For John Danaher, generative AI could redefine nothing less than equality, since it would allow the less endowed to match the best in traditional skills (writing, programming, analysis...). For Danaher, the risk is that equality would then be relegated to the background: "other values such as economic efficiency or individual freedom would take over, leading to greater acceptance of inequalities. Economic efficiency could be put forward if AI enables a sharp rise in productivity and overall wealth, even if that wealth is unevenly distributed. In this scenario, rather than seeking to guarantee a fair distribution of resources, society could accept widening gaps in wealth and status, as long as the whole progresses. This would be a form of acceptance of inequality on the pretext that technology generates overall benefits for everyone, even if those benefits are not shared equally. Likewise, individual freedom could be prioritized if AI gives everyone access to powerful tools that increase their capabilities, but without guaranteeing that everyone benefits equivalently. Some might consider it more important to let individuals use these technologies as they wish, even if this creates new hierarchies based on differentiated use of AI." For Danaher as for Benbouzid, integrating AI into teaching must raise the question of its social consequences!

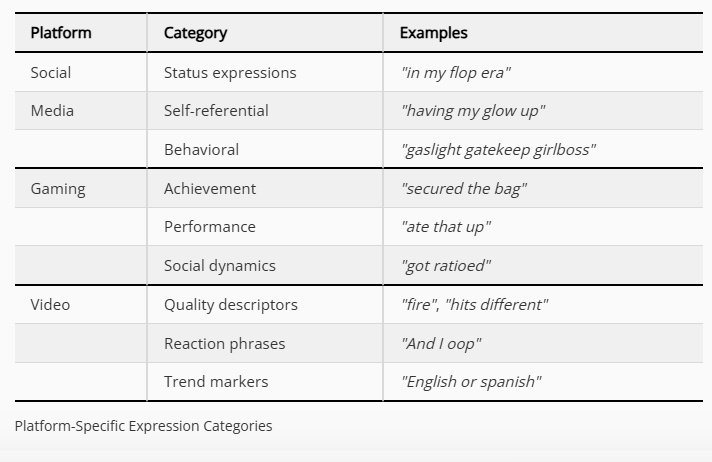

LLMs do not produce a neutral language but tend to reproduce "the dominant linguistic norms of the most privileged social groups," Bilel Benbouzid recalls. A study comparing students' cover letters with texts produced by generative AI shows that the latter mostly match what the higher socio-professional categories (CSP+) produce. For Benbouzid, the risk is that delegating writing to these machines reinforces existing hierarchies rather than redistributing them. Hence the importance of an ongoing survey to understand students' use of generative AI and their social relationship to language.

The first results of this survey show, for example, that students are reluctant to copy and paste AI-generated text directly, not only for fear of sanctions but even more because they understand that the tone and style do not match theirs. "Students often compare ChatGPT to parental help. We understand that legitimacy lies not so much in the nature of the assistance as in the social relationship underlying it. Human help, especially from family, carries a cultural closeness that makes it acceptable, even valued, whereas algorithmic assistance is perceived as a break with the academic level and their own command of the language." And indeed, how students perceive the contribution of LLMs depends on their cultural capital. For the best endowed, ChatGPT is a utilitarian tool, limited or even vulgar, that standardizes language. For the least endowed, it gives them access to valued and valorizing forms of language, which they then adapt so that it fits them socially.

In this relationship to generation tools, a social relationship to language, writing, and education shows through. For Benbouzid, the use of AI then becomes less a moral problem than a social dilemma. "These practices, far from being homogeneous, reflect a differentiated appropriation of the tool according to social trajectories and the symbolic expectations that structure the social relationship to education. What is at stake, ultimately, is a questioning of the way students position themselves socially, when they use chatbots, within the cultural and social hierarchies of the university." In fact, students use the tools not to surpass themselves, as Mollick believes, but to produce socially legitimate content. "By systematically delegating their reading, analysis, and writing skills to these models, students can bypass the essential processes of internalizing and adapting to the discursive and epistemological norms specific to each field. In other words, the student could lose the opportunity to authentically develop their own academic cultural capital, replaced by a dominant habitus artificially produced by AI."

The appearance of instrumental equality that LLMs provide could therefore, paradoxically, reinforce structural inequality, with the tools widening the gap between students who have already internalized the dominant norms and those who merely mimic them. The fact that generated texts lack originality and critical depth, that AI produces superficial texts, does not make all students equal before these tools. On one side, the elite grandes écoles are strengthening oral skills and raising their demands for originality in response to these tools. On the other, other students will have to rely on them out of necessity. "For the best established, AI will be an optional optimization tool; for the most precarious, it will become a condition of survival in a competitive world. Moreover, even if AI benefits the less qualified relatively more, this improvement could at the same time accentuate a form of technological dependence among the most disadvantaged populations, further widening the gap with the elites, who are better equipped to exercise critical discernment toward machine-generated content."

In short, far from the cultural equalization these tools are supposed to enable, there is a strong risk that not everyone will benefit equally. This is already clear elsewhere. The ability to write an administrative letter is far from universally shared. While these tools improve the letters of the less socially advantaged, they in no way overturn social differences. The same observation holds between those who bring out the best in these tools because they master them finely and all the others who merely use them, as Gregory Chatonsky suggested when he distinguished memetic users from productive users. These tools, which present themselves as capable of overcoming social inequalities, risk above all amplifying them. Rather than making it possible to personalize learning and adapt to each student, AI seems to give learning superpowers to those who are already in command of their learning, more than to others.

The school AIpocalypse, stuck in the law

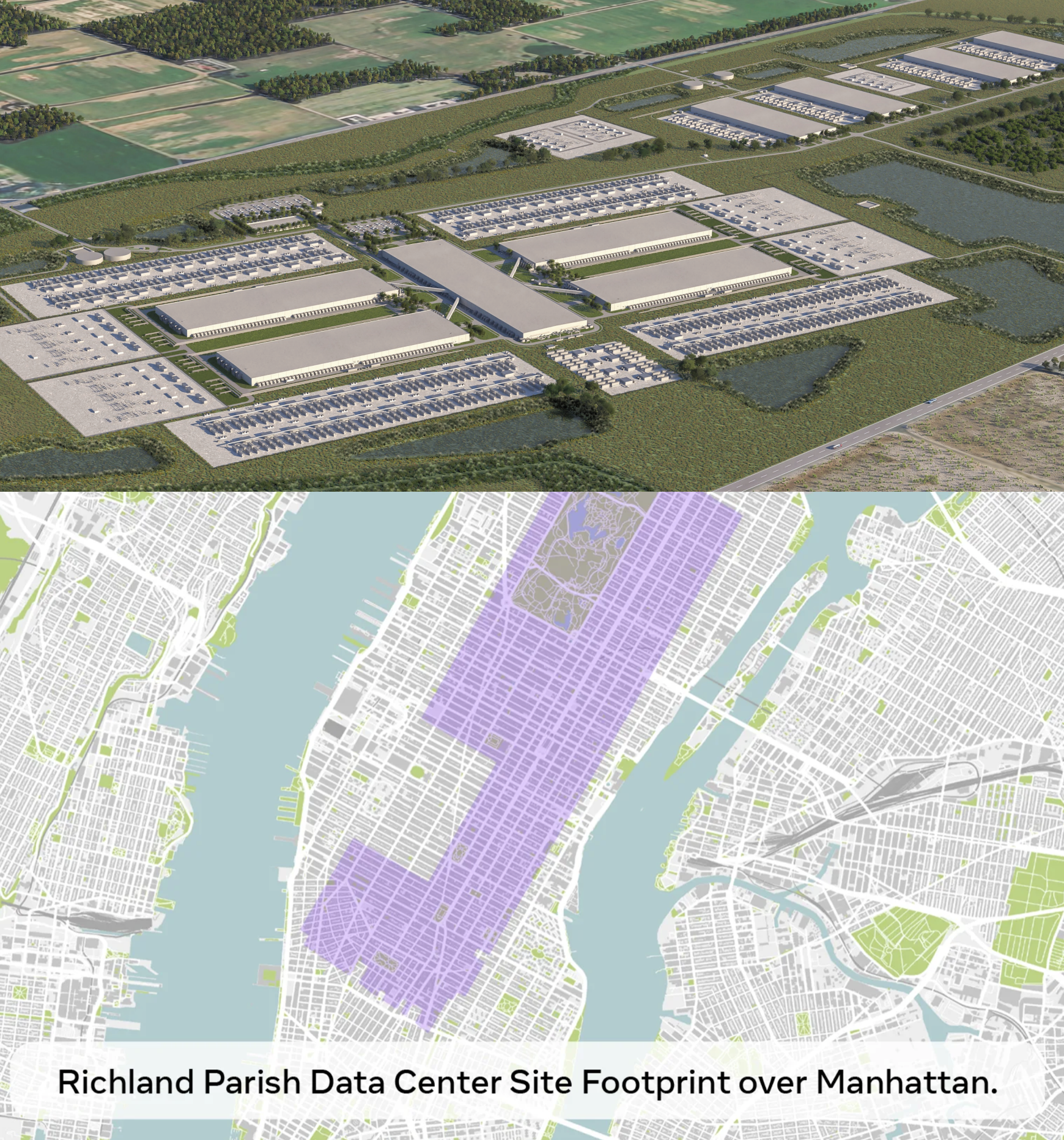

The questions about AI use in school that we have tried to lay out in this series show how important it is to debate a public policy on the use of generative AI in education, from primary school to higher education. But, as our investigation shows, the entire educational community is waiting for a framework. In France, recommendations are awaited from the mission entrusted to François Taddéi and Sarah Cohen-Boulakia on AI teaching practices in higher education, Le Monde reported.

A first framework for AI use in schools has nonetheless just been published by the French Ministry of National Education. Suffice it to say that this procedural framing does not measure up to the stakes. The document consists mostly of a reminder of the rules, which for the most part explain that the use of generative AI is constrained, if not impossible in practice. "No staff member may ask students to use consumer AI services that involve creating a personal account," the document recalls. The note also recommends not using generative AI with students before 4e (the third year of French middle school) and stresses that "the use of a generative artificial intelligence to complete all or part of a school assignment, without the teacher's explicit authorization and without it being followed by personal work of appropriation based on the content produced, constitutes fraud." In other words, this usage framework permits nothing except prohibition. Far from being a development framework open to the invasion of AI, as the SNES-FSU complains, the document mostly seems to keep producing denial, trying to restate rules for uses that already overflow them by a wide margin.

On LinkedIn, Yann Houry, a teacher at a private Swiss institute, was very happy to share his recipe for letting teachers grade papers with a locally run AI, reminding readers that for reasons of legality and confidentiality, teachers should not use online generative AI services to grade papers. In the comments, however, many pointed out that this is not enough: using AI to grade papers, assign marks, and rank students may be classified as a high-risk use under the AI Act; a trainer who used AI this way would have to inform learners so that they can exercise a right of appeal if they disagree with an assessment; and the teacher must also be transparent about what they use in order to remain compliant, and record it in the register of processing activities. In short, on both sides, whether for students, who are referred to fraud however they use it, or for teachers, who may use it only in full transparency, it quickly becomes clear that the use of AI in education remains, formally, highly constrained, not to say impossible.

Other frameworks and reports have been published, such as those from the Inspectorate General, the Senate, or the European Commission and the OECD, but they focus mainly on what teaching about AI should be rather than on providing a framework for the current overflow of uses. In short, for now, the framing of the school AIpocalypse remains to be built, with teachers... and with students.

Hubert Guillaud