The AI Now Institute has just published its 2025 report, and it pulls no punches. “The current trajectory of AI is paving the way for an unenviable economic and political future: one that disenfranchises a large share of the public, makes systems more opaque to the people they affect, devalues our expertise, undermines our security and narrows our prospects for innovation.”

The good news is that the path laid out by the tech industry is not the only one open to us. “This report explains why fighting the industry’s vision of AI is a fight worth having.” As their 2023 report pointed out, AI is first and foremost a matter of power concentrated in the hands of a few giants. “The question we should be asking is not whether ChatGPT is useful, but whether OpenAI’s unchecked power, tied to Microsoft’s monopoly and to the business model of the tech economy, is good for society.”

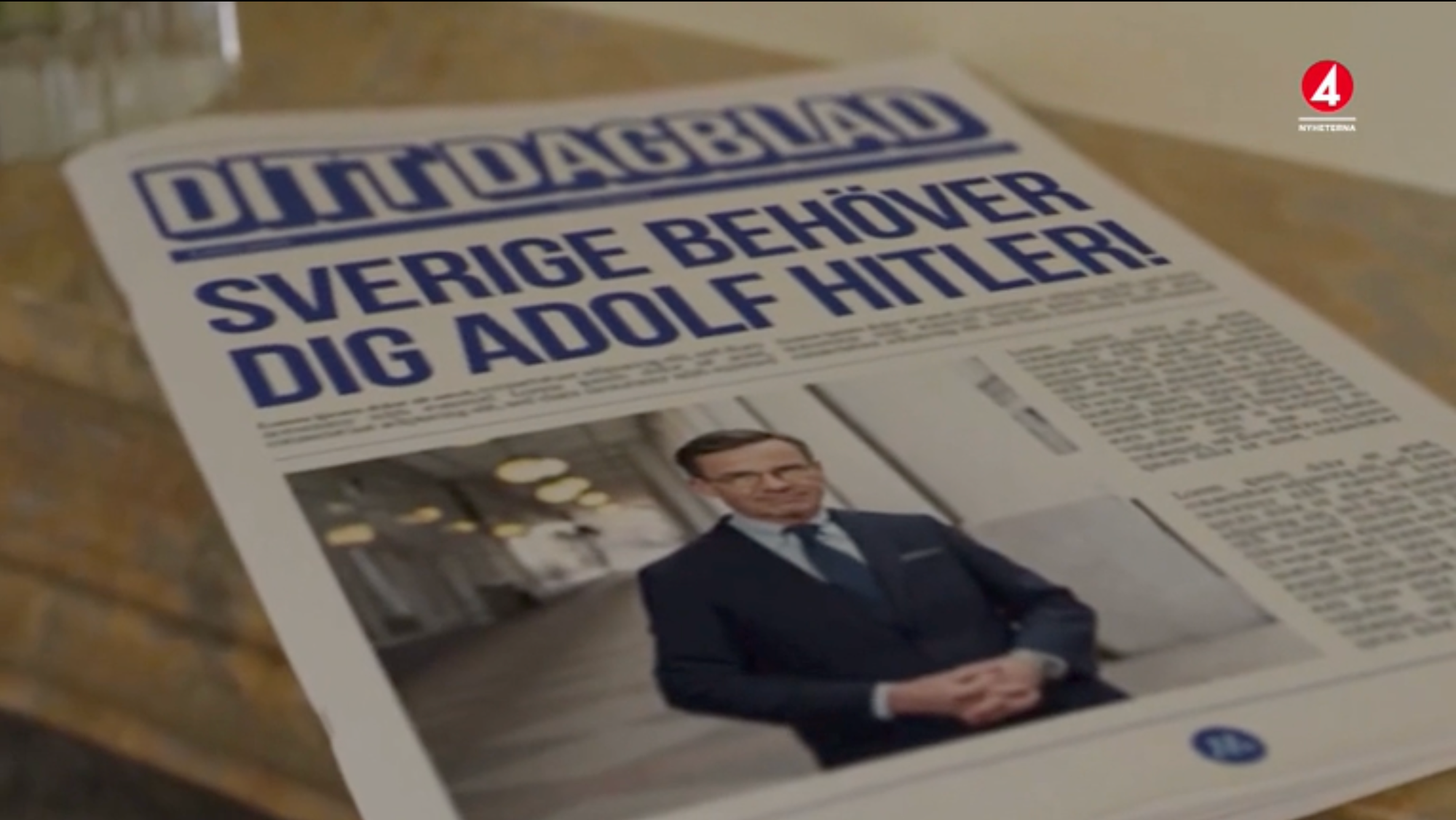

“The arrival of ChatGPT in 2023 marks less a clean break in the history of AI than the reinforcement of a ‘bigger is better’ paradigm, rooted in perpetuating the interests of the companies that benefited from Silicon Valley’s regulatory laxity and low interest rates.” Yet that power is not enough for them. From dismantling government to plundering data, from devaluing labor to make it AI-compatible to redirecting energy infrastructure, and on to the ravaging of information and democracy, the rise of AI demands that our social, political and economic infrastructure be dismantled for the benefit of AI companies. AI revives old strategies of extracting expertise and value, concentrating power in the hands of the extractors so they can build out their empires.

But why would society accept such a trade-off, such an upheaval? For the researchers at the AI Now Institute, this power can and must be disrupted, not least because it is more fragile than it looks. “AI companies lose money on every user they gain,” and the cost of AI at scale is set to be very high, raising the risk that an investment bubble will eventually burst. Meanwhile, the claims of a generative AI revolution contrast sharply with how mundane its integrations are and with the problems they create: automated advertising at Meta, code generation through Copilot (at the expense of developers’ skills) or through AI agents, higher cloud prices driven by AI features bundled in automatically… all while customers are left to cope with the hallucinations, errors and flaws of these products. Yet in real-world settings, the report’s authors point out, AI systems fail badly even at basic tasks: as researchers Inioluwa Deborah Raji, Elizabeth Kumar, Aaron Horowitz and Andrew D. Selbst explain, AI functionality is often an illusion of effectiveness that mostly masks failure. In many use cases, “AI is deployed by those with power against those without it,” with no way to opt out or to seek redress when it gets things wrong.

AI: a faulty tool serving those who deploy it

For the AI Now Institute, the benefits of AI, from cancer treatments to hypothetical economic growth, are overstated and unproven, while some of its harms are real, immediate and spreading. AI solutionism obscures the systemic problems our economies face, hiding the economic concentration under way and serving as a channel for austerity measures dressed up as efficiency, as with the deeply flawed chatbot rolled out by New York City. Millions of dollars of public money have been sunk into failing AI solutions. “The productivity myth obscures a fundamental truth: the benefits of AI accrue to companies, not to workers or the general public. And agentic AI will make workplaces even more bureaucratic and surveilled, reducing autonomy rather than expanding it.”

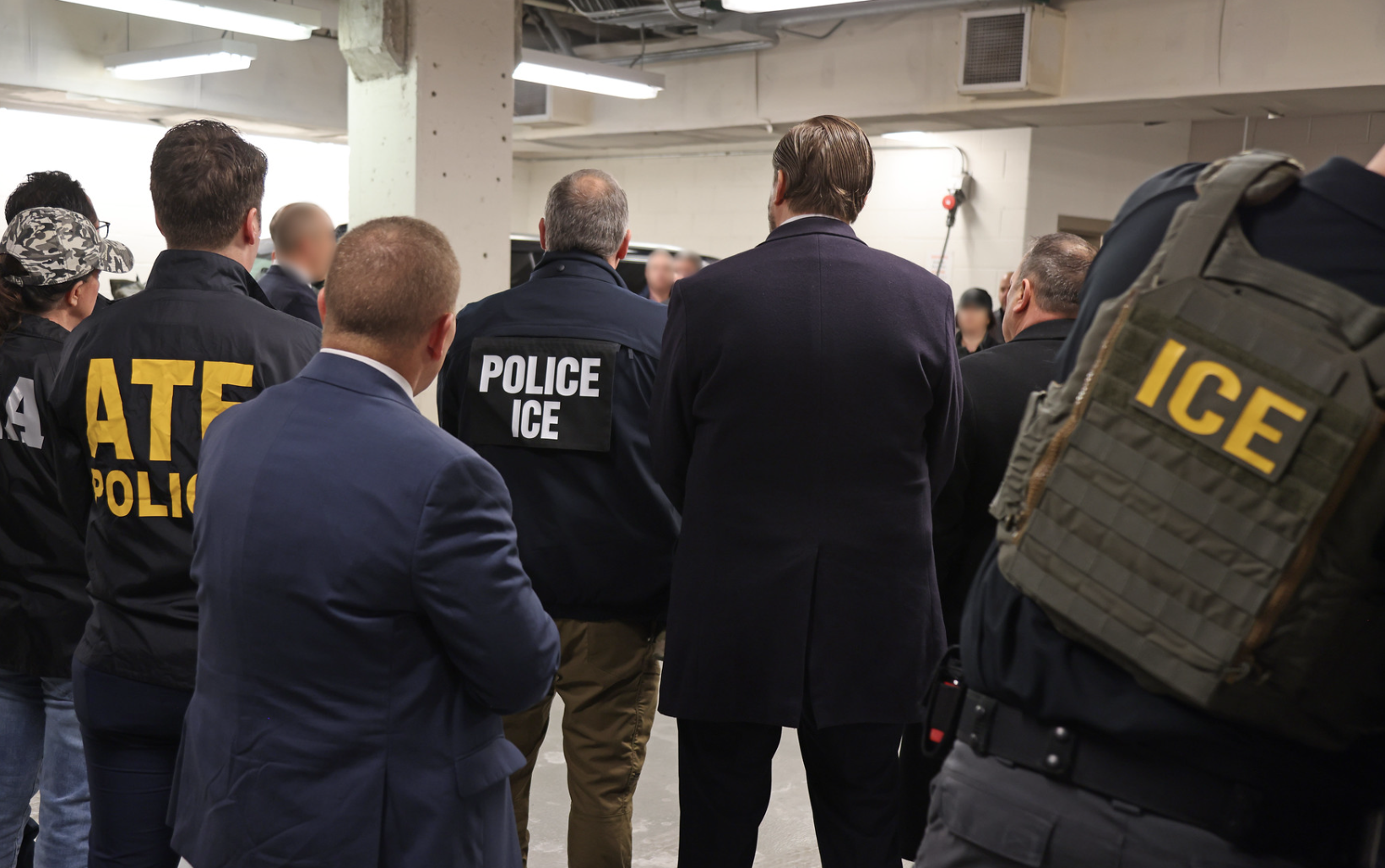

“AI use is often coercive,” violating rights and undermining due process, as the unchecked growth of AI in US immigration enforcement shows (see our article on the end of data siloing, and the one on generative AI as a new layer of labor exploitation). The report devotes an entire chapter to AI’s failures. For AI’s cheerleaders, it will cure all our ills, transforming science, logistics, education and more. But if the tech giants want AI to be available to everyone, then AI should benefit everyone. That is far from the case.

The report takes the example of the promise that AI could eventually cure cancer. AI may well help research in this field, notably by improving screening, detection and diagnosis. Yet, far from a revolution, the gains are likely to be much more incremental than we think. What is questionable in this picture, the AI Now Institute researchers argue, is the assumption that these scientific advances require the unbridled growth of the AI sector’s hyperscalers. And that is precisely the link these executives are trying to draw. “The claim that AI could revolutionize healthcare is used to push AI deregulation to speed up its development.” Scientific prospects inflated into inevitable promises are used to shut down resistance to debating what is at stake with AI and how it is transforming society as a whole.

Yet in this regime of AI failure, very few of the industry’s promises rest on scientific evidence. Much of the sector’s research relies on what comedian Stephen Colbert mocked as “truthiness”: research that has not been peer-reviewed, such as the robot nurses Nvidia promoted by claiming they would outperform nurses themselves… a claim that rested solely on a study by Nvidia. We lack a science of evaluating generative AI. In the absence of independent, widely recognized benchmarks for key attributes such as accuracy or response quality, companies invent their own benchmarks and, in some cases, sell both the product and the benchmark validation platform to the same customer. Scale AI, for example, holds contracts worth hundreds of millions of dollars with the Pentagon to produce AI models for military deployment, including a $20 million contract for the platform that will evaluate the accuracy of AI models intended for defense agencies. Supplying both the solution and its evaluation certainly makes things easier.

Another systemic failure: everywhere, these tools sideline professionals. In education, MOOCs promised to democratize access to courses. They did not. Now techno-solutionism promises democratization through generative AI, via dedicated offerings such as OpenAI’s ChatGPT Edu, at the risk of undermining the very purpose of education. In fact, the report’s authors note, in education as elsewhere AI is often adopted by administrators without discussion with, or involvement of, the people affected. At universities, administrators spend considerable sums on unproven, untested solutions to replace existing technologies run by campus IT departments. The same holds in the workplace, where labor shortages are often cited as a reason to develop AI, when the real problem is less a shortage than a lack of protections or a regime of low-wage austerity. Technological solutions mostly serve to redirect funding away from workers and beneficiaries. AI often serves as a vehicle for austerity by another name. AI systems applied to low-income people almost never improve access to social benefits or other opportunities, as the TechTonic Justice report put it. “AI is not a coherent set of technologies capable of achieving complex social goals.” If anything, it is the exact opposite, the report argues, pointing for instance to the failures of DOGE (which we have documented ourselves). None of this stops solutionism from thriving.
The goal of New York City’s chatbot, for example, “may not actually be to serve residents, but rather to encourage and centralize access to residents’ data; to privatize and outsource government functions; and to consolidate corporate power without meaningful accountability mechanisms,” as the work of the Surveillance Resistance Lab, a strong opponent of the project, explains.

Finally, the productivity myth, repeated by rote by AI developers, makes us forget that AI’s benefits will go far more to them than to the public. “Productivity is a euphemism for the mutually beneficial economic relationship between companies and their shareholders, not between companies and their employees. Not only do workers not benefit from AI-driven productivity gains, but for many of them working conditions will mostly get worse. AI does not benefit workers; it degrades their working conditions by increasing surveillance, notably through individual and collective productivity scores. Companies use the logic of AI productivity gains to justify the fragmentation, automation and, in some cases, elimination of work.” Yet the idea that corporate productivity will inevitably lead to shared prosperity is deeply flawed. In the past, when automation brought productivity gains and higher wages, it was not thanks to the intrinsic capabilities of the technology, but because corporate policies and regulations were designed together to support workers and limit corporate power, as Daron Acemoglu and Simon Johnson explain in Power and Progress (French edition: Pouvoir et progrès, Pearson, 2024). The rise of machine-tool automation around the Second World War is instructive: despite fears of job losses, federal policies and a stronger labor movement protected workers’ interests and demanded higher wages for those operating the new machines. Companies in turn adopted policies to retain workers, such as profit sharing and training, to reduce disruption and avoid strikes.
“Despite growing automation during this period, labor’s share of national income remained stable, average wages rose and demand for workers increased. These gains were reversed by Reagan-era policies that prioritized shareholder interests, used trade threats to erode labor and regulatory standards, and weakened pro-worker and union policies, which allowed tech companies to gain market dominance and control over key resources. The AI industry is a defining product of this history.” Algorithmic wage discrimination optimizes pay downward. Countless practices are used to isolate workers and circumvent existing laws, as FairWork’s 2025 report documents. The promise that AI agents will automate routine tasks has become central to product development, even though it requires the companies that adopt them to become more process-driven and bureaucratic so the agents can operate. Finally, we increasingly interact with AI technologies that are used not by us but on us, shaping our access to resources in areas ranging from finance to hiring to housing, at the expense of transparency and of the very possibility of doing things differently.

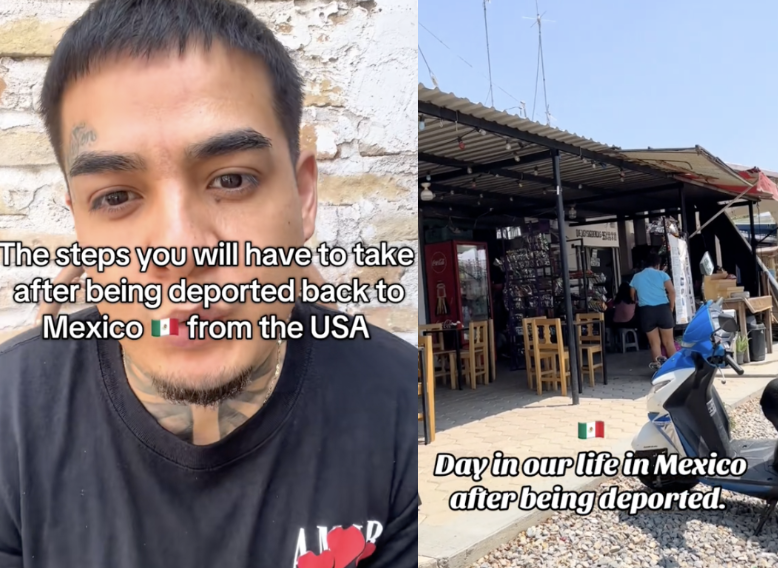

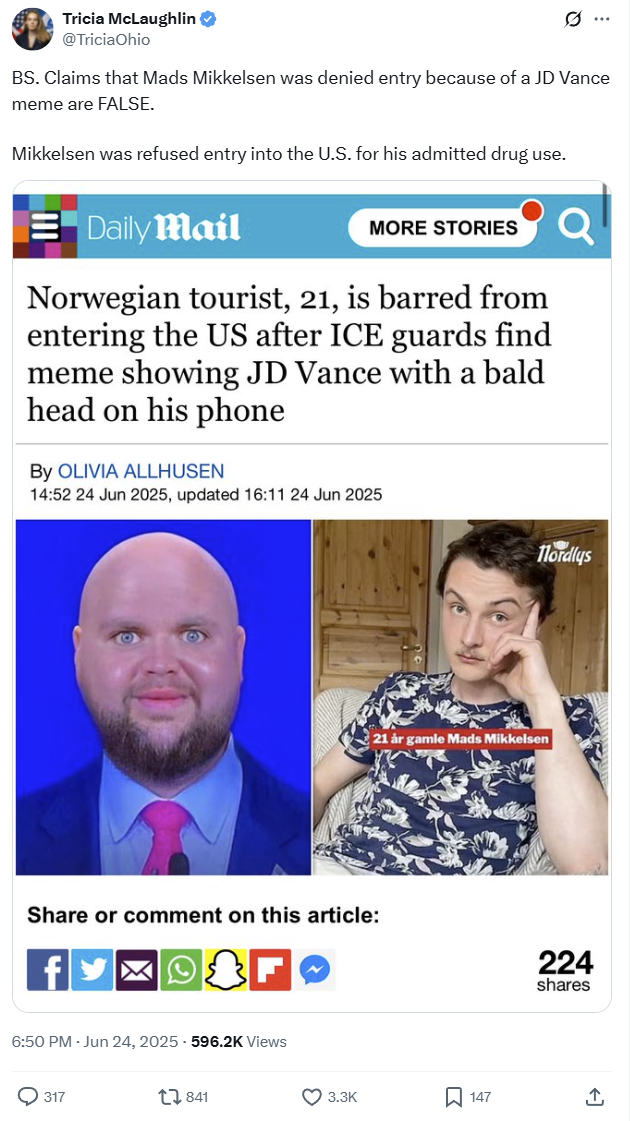

The risk of AI everywhere is that it subjects us to computation rather than freeing us from it. The integration of AI into immigration agencies, for example, shows how thoroughly principles of responsible use are circumvented even after they have been formally adopted, as shown by the report on automated deportation in the United States by Mijente, a Latino and Latin American rights collective. US Citizenship and Immigration Services (USCIS) uses predictive tools to automate its decision-making, such as “Asylum Text Analytics,” which screens asylum applications to flag those deemed fraudulent. Among other flaws, these tools have shown high misclassification rates when used on people whose first language is not English. The consequences of a wrongful fraud finding are serious: deportation, a lifetime ban from the United States and a prison sentence of up to ten years. “Yet transparency for the people affected by these systems is extremely limited, with no way to opt out or seek redress when they are used to make wrong decisions, and, just as importantly, there is little evidence that the effectiveness of these tools has been, or can be, improved.”

Despite the dubious legality and known flaws of many of these systems, which the report documents, the integration of AI into immigration enforcement only looks set to intensify. These tools provide a veneer of objectivity that masks not only blatant racism and xenophobia but also the intense political pressure on immigration agencies to restrict asylum. “AI allows federal agencies to carry out immigration enforcement in deeply and increasingly opaque ways, making things even harder for people who may be arrested or wrongly accused. Many of these tools are known to the public only through legal filings and do not appear in DHS’s AI inventory. But even once they are known, we have very little information about how they are calibrated or what data they are based on, further reducing individuals’ ability to assert their due process rights. These tools also rely on invasive public surveillance, from screening social media posts to facial recognition, aerial surveillance and other monitoring techniques, to mass purchases of public information from data brokers.” We are faced with systems that are coercive, opaque and fundamentally flawed. But these failures spread precisely because they empower law enforcement, helping it meet its deportation and arrest targets. With AI, power becomes the goal.

Levers to overturn the AI empire and bring the struggles against its world together

The last part of the AI Now Institute report sets out an alternative vision of AI through concrete proposals, sketching a roadmap for action. “AI is a struggle over power, not a lever for progress,” write the authors, who call for “reclaiming control over the trajectory of AI” by contesting how it is currently used. The report presents five levers for regaining power over AI.

Showing that AI works against the interests of individuals and society

The first goal in taking back control is to show more clearly that the AI industry acts against the interests of ordinary people. That argument is still not widely shared, partly because the debate on risk focuses mostly on technical bias or existential risk, issues disconnected from people’s material realities. For the AI Now Institute, “we must prioritize policy issues grounded in people’s lived experience of AI,” and portray AI systems as the invisible infrastructure that governs everyone’s lives. In this respect, resistance to the dismantling of public agencies driven by DOGE has opened up a new front. Resistance and outrage over budget cuts and data grabs have shown that improving the efficiency of services was never DOGE’s objective, which has always been to dismantle government services and centralize power. The degradation of social services and the stripping of rights are a mobilizing force to build on.

The construction of data centers for AI is also a new arena for local mobilization on environmental justice, as seen in the struggles the Citizen Action Coalition in Indiana and Memphis Community Against Pollution in Tennessee are trying to bring to public attention.

Rising prices and inflation, along with the spread of algorithmic pricing and wage-setting, are another lever for mobilization, as a February report by the AI Now Institute on the subject showed, calling for an outright ban on individualized surveillance pricing and wage-setting.

Advancing worker organizing

The second lever is to advance worker organizing. When workers and their unions take a serious interest in how AI is changing the nature of work and engage decisively through collective bargaining, contract enforcement, campaigns and policy advocacy, they can influence how their employers develop and deploy these technologies. Union campaigns challenging the use of generative AI in Hollywood, and mobilizations against the algorithmic management of logistics warehouse workers and of ride-hailing and delivery platform workers, have played a key role in raising public awareness of the impact of AI and data technologies in the workplace. Fights to limit rising work quotas in warehouses and for drivers, led by Gig Workers Rising, Los Deliversistas Unidos, Rideshare Drivers United or SEIU, among others, have won protections and pushed back against the precarity that platforms organize… This requires organizations to be able to analyze AI’s impact both on working conditions and on the public, so that the two struggles can converge, as the nurses’ union did when it showed that deploying AI weakens nurses’ clinical judgment and threatens patient safety. That fight produced a “Nurses and Patients’ Bill of Rights,” a set of guiding principles to ensure AI is applied fairly and safely in healthcare settings. The nurses halted the deployment of EPIC Acuity, a system that underestimated how sick patients were and how many nurses were needed, and forced the company deploying it to set up an oversight committee for its implementation.

Another tactic is to challenge austerity-driven AI deployments in the public sector, as the Federal Unionists Network does with its campaign to save federal services and expose the impact of DOGE’s budget cuts. In Pennsylvania, SEIU set up a workers’ council to oversee the deployment of generative AI tools in public services.

Yet another tactic is to run broader campaigns challenging the power of big tech companies, like the Athena coalition, which calls for breaking up Amazon by linking worker surveillance, the company’s sale of services to police, the environmental issues raised by its logistics operations, and the impact of algorithmic systems on small businesses and on the prices consumers pay.

In short, the challenge is to connect struggles, linking unions with privacy organizations and with groups working for racial or social justice, in order to run organized campaigns on these issues. It also means extending this work across the entire AI value and supply chain, beyond US concerns, even though for now “no serious attempt has been made to organize the sector affected by large-scale AI deployment.” Initiatives do exist, though, such as Amazon Employees for Climate Justice, the African Content Moderators Union, African Tech Workers Rising, the Data Worker’s Inquiry Project, the Tech Equity Collaborative and the Alphabet Workers Union (which campaigns on the unequal treatment of employees and contract workers).

We desperately need more ambitious, better-resourced organizing projects, the report notes. The people who build and train AI systems, and who therefore know them intimately, have a unique opportunity to use their position of power to hold tech companies accountable for how these systems are used. “Organizing and taking collective action from these positions will have a profound impact on how AI evolves.”

“Like the labor movement of the last century, today’s labor movement can fight for a new social contract that puts AI and digital technologies at the service of the public interest and holds today’s unaccountable power to account.”

Zero trust toward AI companies!

The third lever the AI Now Institute champions is more radical still: it proposes adopting a “zero trust” policy agenda toward AI. In 2023, AI Now, the Electronic Privacy Information Center and Accountable Tech already argued that “blind trust in the benevolence of tech companies is not an option.” To build this agenda, the report lists six measures.

First, the report calls for “bold, clear rules that restrict harmful AI applications.” It is up to the public to decide whether, in what contexts and how AI systems will be used. “Compared with frameworks built on process-based safeguards (such as AI audits or risk-assessment regimes), which in practice have often tended to entrench industry leaders and to depend on strong regulatory capacity for effective enforcement, clear rules have the advantage of being easy to administer and of targeting harms that simple safeguards can neither prevent nor remedy.” For the AI Now Institute, AI should be banned for emotion recognition, social scoring, price- and wage-setting, denying compensation claims, replacing teachers and generating deepfakes. And worker surveillance data must not be sold to third-party vendors. The first priority is to widen the scope of prohibitions.

Next, the report proposes regulating the entire AI lifecycle. AI must be regulated throughout its development cycle, from data collection to deployment, including training, fine-tuning and application development, as the Ada Lovelace Institute has proposed. The report notes that while transparency underpins effective regulation, corporate resistance to it is strong at every stage, from the training data used to how applications work. Transparency and explanation should be proactive, the report suggests: users should not have to individually request information about how their data and cases are processed. In particular, the report stresses the need for “developers to document and publicly disclose their risk-mitigation techniques, and for regulators to require disclosure of any anticipated risk they are unable to mitigate, so that it is transparent to other actors in the supply chain.” The report also recommends enshrining a “right to opt out” of decisions and requiring user councils so that people have a say in how systems are developed and used.

The report also stresses that oversight must be independent. It is not up to the industry to evaluate its own work. “Red teaming” and “model cards” ignore the conflicts of interest at play and rely on methodologies that are ultimately not very robust (see our article). Another lever is to go after the roots of these companies’ power: for instance, requiring them to delete illegally acquired data and the models trained on it (some researchers speak of model deletion and algorithmic destruction!); limiting data retention for retraining; and restricting partnerships between hyperscalers and AI startups, as well as acquisitions, to curb the formation of monopolies.

The report also proposes building a toolkit to promote competition. Numerous investigations point to big tech’s failure to comply with competition law, but enforcement actions struggle to take hold and to produce legislative changes that would strengthen competition law and curb monopolies, while tech companies always denounce any market intervention as anti-innovation. The report calls for stronger structural separation of business lines: for example, cloud companies should not be able to participate in the foundation model market, and interlocking board seats between startups and model developers should be banned. Cloud providers should also be barred from exploiting the data they obtain from customers whose infrastructure they host to develop competing products.

Finally, the report recommends rigorous oversight of data center development and operation, since the companies building them receive tax exemptions while nearby residents bear disproportionate impacts (competition for resources, rising electricity rates…). Affected communities need transparency mechanisms and strong environmental protections. Regulators should cap subsidies according to the protections granted and the jobs created, and introduce rules that bar passing rate increases on to ratepayers.

Break down the silos!

Siloing AI issues is another problem that must be overcome. One example is the obsession with national security, which justifies both regulatory measures and programs to accelerate and expand the AI sector and its infrastructure. But breaking down silos above all means disrupting the surveillance under way and treating privacy as a matter of economic justice. The rise of surveillance to drive automation “places traditional privacy tools (such as consent, opt-outs, impermissible purposes and data minimization) at the heart of building fairer economic conditions.” Researcher Ifeoma Ajunwa argues that workers’ data should be considered “captured capital” for companies: it is used to train technologies that will eventually replace workers (or create the conditions for cutting their wages), or sold to the highest bidder through a growing network of data brokers, without oversight or compensation. From gig workers to click workers, data exploitation requires putting worker privacy back at the center of the economic justice agenda to limit its capture by AI. Points of collection and surveillance must be “the appropriate target of resistance,” because they will be weaponized against workers’ interests. On the regulatory side, this could mean favoring data minimization rules that restrict data collection and use, strengthening confidentiality (for example by banning the sharing of employee data with third parties), the right to refuse consent, and so on.
Strengthening minimization and securing government data on individuals, which is high-quality and particularly sensitive, is more urgent than ever.

“We must reclaim the positive agenda of public-centered innovation, and AI should not be at its center,” the authors conclude. The current market-driven trajectory of AI harms the public, while the space for alternative solutions is shrinking. We must reject the paradigm of large-scale AI that will benefit only the most powerful.

Public AI remains fertile ground for advancing debate on alternative trajectories for AI that are structurally more aligned with the public interest, and for ensuring that any public funding in this area is tied to public-interest goals. For that to happen, though, public AI must not limit itself to buying private solutions. It must develop its own AI capabilities and rebuild its in-house expertise so as not to give in to AI solutionism; foster dialogue with users everywhere; and cultivate a community of practice around public-interest innovation that will shape an alternative space, for example by requiring methods for involving the public and by broadening the state’s concern from its own interests to the collective interest (for instance by making funding conditional on advancing climate goals or on union and civic support…). It must also redefine the concrete terms of public AI funding, ensuring that investments meet community needs rather than corporate interests.

Changing the agenda: toward public AI!

Finally, the report argues that innovation should be centered on the needs of the public and that AI should not be at its center. AI development should be guided by non-market imperatives, and public and philanthropic capital should help build an innovation ecosystem outside the industry. This has been called for by the Public AI Network in one report and the Ada Lovelace Institute in another, as well as by Lawrence Lessig, by Bruce Schneier and Nathan Sanders, and by Ganesh Sitaraman and Tejas N. Narechania. All of them speak of public AI rather than sovereign AI, in order to steer investment less toward national security and competitiveness and more toward social justice.

These arguments confirm that the market-driven trajectory of AI harms the public. Alternative proposals are not lacking, but they fail to meet the challenge of power concentrated in the hands of large corporations. “Rejecting the current paradigm of large-scale AI is necessary to counter the information and power asymmetries inherent in AI. This is the hidden part that must be said out loud. This is the reality we must face if we want to muster the will and creativity needed to shape things differently.” In 2021, a US report by an independent commission chaired by former Google CEO Eric Schmidt, and made up of executives from many large technology companies, had already stated the risk perfectly: “the consolidation of the AI industry threatens U.S. technological competitiveness.” The commission proposed creating public resources for AI, the National AI Research Resource (NAIRR).

“Public AI remains fertile ground for fostering debate about alternative trajectories for AI that are structurally better aligned with the public interest, and for ensuring that any public funding in this area is conditioned on public-interest goals.” A California bill recently revived a proposal for a public computing cluster called CalCompute, to be hosted within the University of California system. New York State has launched an initiative called Empire AI to build public cloud infrastructure across seven research institutions in the state, bringing together more than $400 million in public and private funds. Both initiatives open important spaces for advocacy to ensure that their resources meet the needs of communities rather than further enriching the tech giants.

The report closes with a call to defend public AI by supporting universities, investing in these public AI infrastructures, and ensuring that disadvantaged groups have real authority within these projects. We must cultivate a community of practice around public-interest innovation.

***

The great strength of the AI Now Institute report is that it reminds us that struggles against AI exist. They are not merely the struggles of tech-critical collectives: they are already embodied in political projects, even if those projects have trouble connecting with one another and getting organized. These struggles are often rendered invisible because AI’s promoters are given almost all of the airtime. The report is extremely rich and brings together documentation unlike any other.

“AI does not promise to free us from the relentless cycle of wars, pandemics, and environmental and financial crises that characterizes our present,” the report concludes. AI does none of that, and it creates nothing we need. “Tying our common future to AI makes that future harder to achieve, because it locks us into a decidedly bleak path, depriving us not only of the ability to choose what to build and how to build it, but also of the joy we could feel in building a different future.” AI as our only horizon “takes us even further from a dignified life, one in which we would have the autonomy to make our own decisions and in which democratically accountable structures would distribute power and technological infrastructure in a robust, accountable way, protected from systemic shocks.” AI only consolidates and amplifies existing power asymmetries. “It naturalizes inequality and merit as inevitable, while making the underlying patterns and judgments that shape them impenetrable to those affected by AI’s judgments.”

Yet another AI is possible, according to the AI Now Institute researchers. We cannot fight the tech oligarchy without rejecting the industry’s current trajectory of large-scale AI. Nor should we forget that public opinion is firmly opposed to the entrenched power of tech companies. Admittedly, the tech sector has greater resources than ever and the political context is darker than ever, the researchers concede. That does not stop them from making proposals. One is to adopt a “zero trust” policy agenda for AI: an agenda founded on clear rules that restrict the most harmful uses of AI, govern its entire life cycle, and ensure that the industry that currently builds and operates AI is not left to regulate and evaluate itself. Another is to rethink the traditional levers of data privacy as key tools in the fight against automation and against market power.

Finally, they call for reclaiming a positive agenda of public-centered innovation, without AI at its center.

“The current trajectory of AI puts the public at the mercy of unaccountable tech oligarchs. But their success is not inevitable. By freeing ourselves from the idea that large-scale AI is inevitable, we can reclaim the space needed for genuine innovation and promote exciting, novel alternative paths that harness technology to shape a world that serves the public and is governed by our collective will.”

AI’s current trajectory toward supremacy will not lead us to the world we want. But that supremacy has not yet arrived. “By adopting the current vision of AI, we lose a future in which AI fosters stable, dignified and rewarding jobs. We lose a future in which AI fosters fair and decent wages instead of depressing them; in which AI guarantees workers control over the impact of new technologies on their careers, instead of undermining their expertise and knowledge of their own work; in which we have strong policies to support workers if and when new technologies automate existing functions, including laws expanding the social safety net, instead of AI boosters bragging to shareholders about the savings achieved through automation; in which strong social benefits and leave policies secure employees’ long-term well-being, instead of AI being used to surveil and exploit workers at every turn; in which AI helps protect employees from occupational health and safety risks, instead of perpetuating dangerous working conditions and applauding employers who exploit loopholes in the labor market to evade their responsibilities; and in which AI fosters meaningful connection through work, instead of cultures of fear and alienation.”

For the AI Now Institute, the goal is shared prosperity, and that is not the direction the AI empires are taking. The spread of any new technology has the potential to expand economic opportunity and lead to broadly shared prosperity. But such prosperity is incompatible with AI’s current trajectory, which aims to maximize shareholder profit. “The insidious myth that AI will bring ‘productivity’ for all, when in reality it means the productivity of a small number of companies, pushes us even further down the path of shareholder profit as the sole economic objective. Even well-intentioned government policies designed to boost the AI sector pick workers’ pockets. For example, government incentives meant to revitalize the chip manufacturing industry were undercut by corporate stock buyback provisions, sending millions of dollars to companies rather than to workers or job creation. And despite a few meaningful initiatives to investigate the AI sector under the Biden administration, companies remain largely unchecked, which means new entrants cannot challenge these practices.”

“This means breaking up large companies, restructuring the venture-capital-driven funding model so that more companies can thrive, investing in public goods to ensure that technological resources do not depend on large private companies, and increasing institutional investment to bring a greater diversity of people, and therefore of ideas, into the tech workforce.”

“We deserve a technological future that supports strong democratic values and institutions.” We urgently need to restore the institutional structures that protect the public interest against oligarchy. This will require tackling tech power on several fronts, notably by putting in place corporate accountability measures to rein in the tech oligarchs. We cannot let them take over the future.

On this point, as on the others, we agree.

Hubert Guillaud